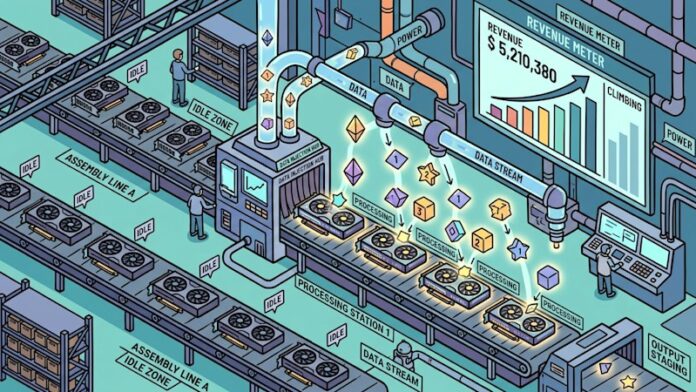

GPU clusters, the backbone of machine learning, often sit idle between training runs or while workloads shift, yet operators keep paying for power and cooling. FriendliAI, founded by the researcher behind the widely adopted continuous batching technique, is launching InferenceSense, a platform designed to monetize this otherwise lost capacity. Instead of letting powerful hardware sit dark, InferenceSense lets neocloud operators sell unused GPU cycles for AI inference and share in the resulting revenue, which is reported to them in real time.

The Core Problem: Wasted Resources

GPU clusters rarely run at full utilization. Training jobs finish, demand fluctuates, and expensive hardware sits inactive while fixed costs accumulate. Spot GPU markets exist, but they only rent raw capacity to a third party, who must then bring and tune their own inference stack. FriendliAI’s approach is different: the platform itself runs inference workloads on the operator’s idle GPUs, so the operator earns from that capacity without renting it out or building an inference stack.

Continuous Batching: The Foundation of Efficiency

The technology driving InferenceSense stems from the work of Byung-Gon Chun, a professor at Seoul National University whose research on continuous batching reshaped how large language models are served. His 2022 paper, Orca, introduced iteration-level scheduling: rather than waiting for a full batch to finish before starting the next one, the server admits new requests and retires completed ones at each generation step. This technique, now an industry standard and implemented in the popular vLLM inference engine, keeps GPUs busy and raises throughput.

Chun’s core innovation is now being applied to the problem of GPU underutilization. The goal is to fill idle cycles with paid inference workloads, optimizing for token throughput and sharing revenue with the operator.
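To make the batching idea concrete, here is a toy Python model. It is a sketch under simplifying assumptions, not Orca’s or vLLM’s actual scheduler: every request has already arrived, each decode iteration produces one token per running request, the batch has a fixed number of slots, and prefill and memory limits are ignored. The output lengths are made up for illustration.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Decode iterations when each batch runs until its longest request ends."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Decode iterations when freed slots are refilled before every iteration."""
    waiting = deque(lengths)
    running = []  # tokens still to generate for each in-flight request
    steps = 0
    while waiting or running:
        # Admit waiting requests into any free slots.
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        # One iteration generates one token for every running request,
        # then finished requests leave the batch.
        running = [r - 1 for r in running]
        running = [r for r in running if r > 0]
        steps += 1
    return steps


# Hypothetical output lengths (in tokens) for eight queued requests.
lengths = [2, 8, 3, 8, 2, 2, 3, 3]
static = static_batching_steps(lengths, batch_size=4)
continuous = continuous_batching_steps(lengths, batch_size=4)
print(f"static: {static} iterations, continuous: {continuous} iterations")
```

With these made-up lengths, the static schedule needs 11 iterations and the continuous one needs 8, because slots freed by short requests are refilled immediately instead of idling until the longest request in the batch finishes.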

How InferenceSense Works: Seamless Integration

InferenceSense integrates directly with existing Kubernetes infrastructure, which most neocloud operators already use for resource orchestration. Operators allocate GPU pools to a FriendliAI-managed cluster, defining availability and reclaim conditions. When GPUs are unused, the platform spins up isolated containers to serve inference workloads for open-weight models like DeepSeek, Qwen, and Kimi.

The handoff is designed to be seamless: when the operator’s scheduler needs hardware back, inference workloads are preempted within seconds. FriendliAI handles demand aggregation through direct clients and aggregators like OpenRouter, ensuring a consistent flow of jobs. Real-time dashboards provide operators with transparency into model usage, token processing, and earned revenue.
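The fill-and-reclaim cycle described above can be sketched as a small in-memory model. This is purely illustrative: it does not use the Kubernetes API and is not FriendliAI’s implementation. The `Gpu` and `IdlePool` classes, the method names, and the rule of reclaiming empty GPUs first are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Gpu:
    name: str
    owner_job: Optional[str] = None        # operator's own workload, if any
    inference_model: Optional[str] = None  # inference container using idle time


@dataclass
class IdlePool:
    gpus: List[Gpu] = field(default_factory=list)

    def fill_idle(self, model: str) -> List[str]:
        """Start an inference container on every GPU the operator is not using."""
        started = []
        for gpu in self.gpus:
            if gpu.owner_job is None and gpu.inference_model is None:
                gpu.inference_model = model
                started.append(gpu.name)
        return started

    def reclaim(self, job: str, count: int) -> List[str]:
        """Hand `count` GPUs back to the operator, preempting inference if needed."""
        free = [g for g in self.gpus if g.owner_job is None]
        if len(free) < count:
            raise RuntimeError("not enough GPUs without an operator job")
        # Prefer GPUs with no inference container so fewer requests are cut off.
        free.sort(key=lambda g: g.inference_model is not None)
        handed_back = []
        for gpu in free[:count]:
            gpu.inference_model = None  # preempt the inference container
            gpu.owner_job = job
            handed_back.append(gpu.name)
        return handed_back


# Hypothetical three-GPU pool: one GPU is busy with the operator's training job.
pool = IdlePool([Gpu("gpu-0", owner_job="train-a"), Gpu("gpu-1"), Gpu("gpu-2")])
started = pool.fill_idle("example-open-weight-model")
reclaimed = pool.reclaim("train-b", count=1)
print("serving inference on:", started)
print("returned to operator:", reclaimed)
```

In this sketch the operator’s request always wins: reclaiming a GPU clears its inference container before the operator’s job is assigned, which mirrors the priority order the article describes.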

Token Throughput vs. Raw Capacity: A Crucial Distinction

Traditional spot GPU markets monetize raw capacity: the operator is paid for hardware time regardless of how much work it does. InferenceSense instead monetizes token throughput, so operators earn based on the tokens actually processed. FriendliAI claims its engine delivers two to three times the throughput of standard vLLM deployments, thanks to a custom-built C++ stack and proprietary GPU kernels that bypass Nvidia’s cuDNN library.

If that claim holds, the extra efficiency translates directly into more revenue per idle GPU-hour, and operators could earn more by filling idle time with inference than by renting out raw capacity.
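The arithmetic behind throughput-based pricing is simple enough to sketch. Every number below is a hypothetical placeholder rather than FriendliAI or market data: the article does not state token prices, baseline throughput, or the revenue split, so the function and constants exist only to show how earnings scale with throughput.

```python
def idle_revenue_per_gpu_hour(tokens_per_second, price_per_million_tokens,
                              operator_share):
    """Operator's share of revenue from one GPU-hour spent serving tokens."""
    tokens_per_hour = tokens_per_second * 3600
    gross = tokens_per_hour / 1_000_000 * price_per_million_tokens
    return gross * operator_share


# Hypothetical placeholders, chosen only to keep the arithmetic readable.
BASELINE_TOKENS_PER_SECOND = 1_000
PRICE_PER_MILLION_TOKENS = 0.50  # USD
OPERATOR_SHARE = 0.5             # assumed split; the article gives no figure

revenue = {}
for speedup in (1, 2, 3):  # 2x and 3x correspond to the claimed throughput range
    revenue[speedup] = idle_revenue_per_gpu_hour(
        BASELINE_TOKENS_PER_SECOND * speedup,
        PRICE_PER_MILLION_TOKENS,
        OPERATOR_SHARE,
    )
    print(f"{speedup}x throughput: ${revenue[speedup]:.2f} per idle GPU-hour")
```

Because revenue is linear in tokens served, a two- to threefold throughput gain produces the same multiple in earnings per idle GPU-hour at a fixed token price; with these placeholders that is $0.90, $1.80, and $2.70.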

Implications for AI Engineers and Cost Optimization

For AI engineers, the rise of platforms like InferenceSense could shift the economics of inference. As neoclouds monetize idle capacity, they may be incentivized to lower token prices to remain competitive. While still early, this trend could drive down API costs for popular models like DeepSeek and Qwen over the next year.

The key takeaway is that infrastructure decisions are evolving. Neoclouds are becoming more attractive not just for price and availability but also for their potential to leverage unused capacity and pass those savings on to customers.

Ultimately, InferenceSense represents a paradigm shift in GPU utilization. By turning wasted cycles into revenue, FriendliAI is reshaping the economics of AI inference.